You probably feel the tension already: product and engineering want to ship faster, security and compliance want fewer defects, and finance wants lower burn. QA and software testing automation services sit right in the middle of that triangle. Get them wrong and you either leak bugs into production or grind releases to a halt. Get them right and you release more often, with fewer incidents, at a lower long‑term cost.

Table of Contents

1. Quick comparison table of QA and software testing automation services
2. Model 1: Building in‑house QA and automation for full ownership
3. Model 2: Outsourced QA and software testing automation service providers
4. Model 3: Hybrid QA automation blending internal ownership and external muscle
5. How to choose the right QA and software testing automation model

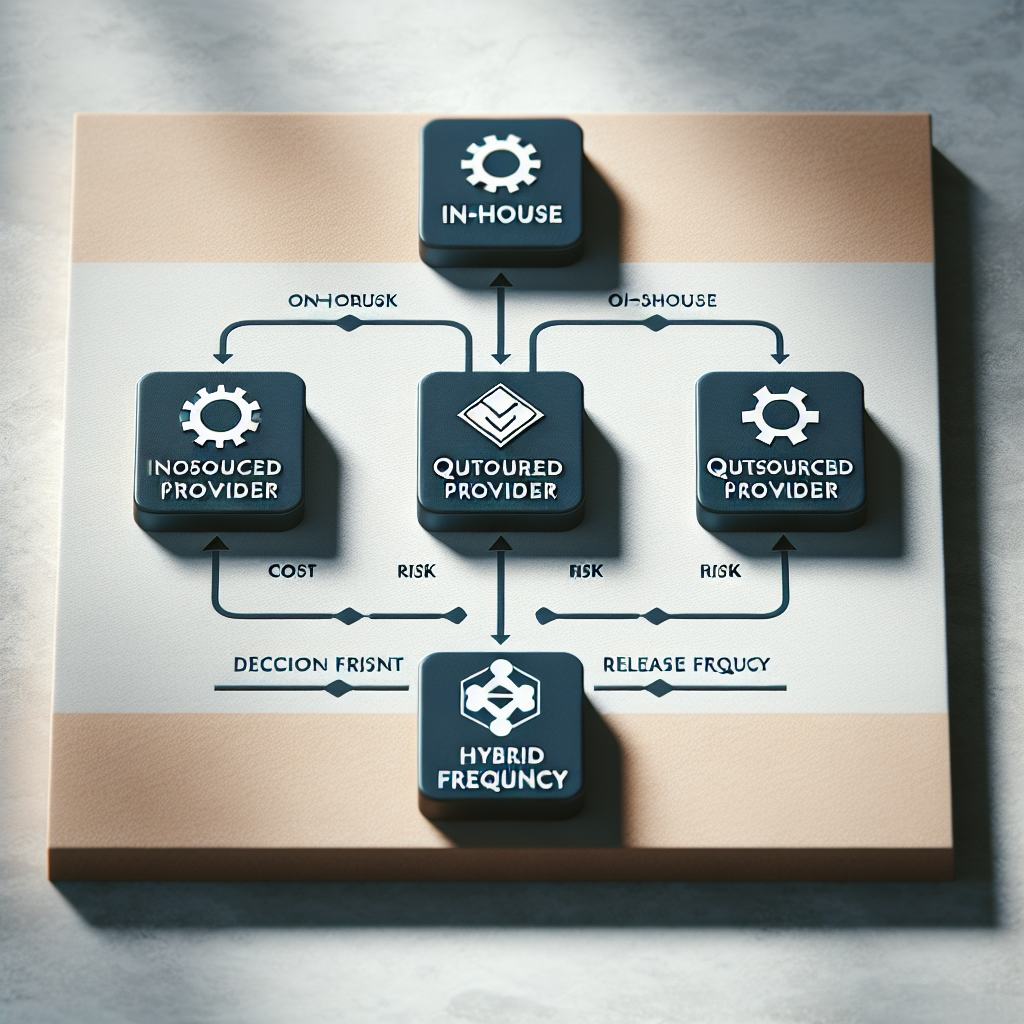

## Key Takeaways

| Option | Strengths | Weaknesses | Best For |
|---|---|---|---|
| In‑house QA automation | Deep product knowledge, tight alignment with engineering, strong security control | Higher cost, slower to staff, risk of narrow tooling expertise | Regulated industries, complex products, long‑term stable roadmaps |
| Outsourced QA automation services | Lower cost, faster ramp‑up, broad toolset experience, flexible scaling | Knowledge transfer overhead, vendor dependency, variable quality | Startups, cost‑constrained teams, aggressive release schedules |
| Hybrid QA automation model | Balance of control and flexibility, strong standards with elastic capacity | Requires good coordination and governance, not trivial to set up | Growing companies with recurring releases and mixed complexity |

## 1. Quick comparison table of QA and software testing automation services

Before we get philosophical about QA strategy, it helps to see the trade‑offs side by side. Most teams I work with end up choosing one of three models for QA and software testing automation services: fully in‑house, fully outsourced to a specialist provider, or a hybrid setup with a core internal team plus external capacity.

None of these is universally "right." The wrong choice is usually the one that doesn’t match your release cadence, domain complexity, and budget reality.

Below is a practical comparison table you can scan in under a minute.

- In‑house focuses on depth and ownership, but you’ll pay in cash and time.

- Outsourced focuses on coverage and speed, but you’ll pay in coordination.

- Hybrid tries to be boringly smart: internal brains, external bandwidth.

| Model | Cost Profile | Speed to Ramp | Knowledge Depth | Scalability | Security & Control | Typical Tooling | Best For |
|---|---|---|---|---|---|---|---|
| In‑house QA automation | Highest (salaries, benefits, tooling, training) | Slow (3–6 months to hire and onboard) | Deep product and domain knowledge | Moderate (limited by hiring capacity) | Highest control over data and process | Custom frameworks, tightly coupled CI/CD | Regulated or complex products with stable roadmaps |
| Outsourced QA automation services | Lower per‑hour cost, predictable engagement fees | Fast (2–6 weeks to onboard) | Shallower domain knowledge initially | High (scale up/down with contract) | Medium (requires NDAs, access policies) | Off‑the‑shelf tools plus provider frameworks | Startups, SMBs, or teams under cost and time pressure |
| Hybrid QA automation | Mid‑range, better cost/coverage balance | Medium (core team + provider ramp) | Good depth plus external breadth | High, with internal governance | High, if access is segmented | Mix of internal and provider tooling | Growing companies needing both speed and control |

Pro tip: If you’re unsure, model a single product squad first and compare defect rates, release frequency, and total QA cost across 2–3 sprints before scaling a model company‑wide.
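If you run that pilot, it helps to score each model the same way. Below is a minimal Python sketch, assuming you can export three numbers per sprint for the pilot squad: escaped (production) defects, production releases, and total QA spend. The `SprintMetrics` record, the `summarize` helper, and every number in the usage lines are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SprintMetrics:
    """Hypothetical per-sprint numbers for one squad under one QA model."""
    escaped_defects: int  # defects first found in production during the sprint
    releases: int         # production releases shipped during the sprint
    qa_cost: float        # total QA spend for the sprint (people, fees, tooling)


def summarize(sprints: list[SprintMetrics]) -> dict[str, float]:
    """Average the pilot sprints so two QA models can be compared side by side."""
    total_releases = sum(s.releases for s in sprints)
    if total_releases == 0:
        raise ValueError("need at least one release to compute cost per release")
    return {
        "avg_escaped_defects": mean(s.escaped_defects for s in sprints),
        "avg_releases": mean(s.releases for s in sprints),
        # Normalizing spend by releases shows what each shipped release cost in QA.
        "qa_cost_per_release": sum(s.qa_cost for s in sprints) / total_releases,
    }


# Placeholder pilot data: three sprints per model, numbers invented for illustration.
in_house = [SprintMetrics(2, 4, 30_000), SprintMetrics(1, 5, 30_000), SprintMetrics(3, 4, 30_000)]
outsourced = [SprintMetrics(4, 5, 18_000), SprintMetrics(3, 5, 18_000), SprintMetrics(2, 6, 18_000)]

for name, sprints in (("in-house", in_house), ("outsourced", outsourced)):
    print(name, summarize(sprints))
```

Cost per release is just one way to normalize spend; dividing by story points or covered user flows works too. Reading it next to escaped defects keeps a cheaper model that leaks more bugs from looking like a free win.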

## 2. Model 1: Building in‑house QA and automation for full ownership

In‑house QA and software testing automation services mean you hire your own SDETs, test architects, and QA engineers, and they live inside your org chart. They attend your standups, argue with your developers about flaky tests, and build frameworks tailored to your stack.

The upside? Ownership and context. Your QA folks actually understand why a specific workflow matters to a certain customer segment. They can say, "No, that change is risky during quarter‑end" because they’ve seen what happens when billing goes wrong.

The downside is obvious and slightly painful: cost and speed. According to salary benchmarks in the U.S., experienced automation engineers typically sit in the upper quartile of engineering pay bands, and you still need to budget for tooling, training, and ramp‑up time before they’re productive. And in a competitive tech market, hiring them can feel like a never‑ending interview loop.

From a quality perspective, in‑house QA tends to produce more stable automation suites over time. Why? Because the people writing tests also live with the long‑term maintenance pain. They refactor. They delete brittle tests. They care if your CI pipeline takes 90 minutes.

But in‑house teams can also become insular. I’ve seen internal QA staff cling to a legacy Selenium framework long after Cypress or Playwright would’ve halved their flakiness and runtime, just because "we’ve always done it this way."

On the security and compliance side, in‑house is usually the easiest to justify. Auditors like clear boundaries. Your data doesn’t leave your own systems, access can be tightly controlled, and your InfoSec team knows exactly who to talk to when they’re worried.

One more nuance: if your product is technically complex (think medical devices, trading platforms, or anything touching critical infrastructure), in‑house expertise isn’t a luxury; it’s survival. Third‑party teams often underestimate domain edge cases. You can’t really afford that.

Well, actually, there is one big catch: capacity. When your product roadmap spikes, QA coverage often lags behind because you simply can’t hire fast enough. That’s when regression cycles slip and production incidents climb.

Pros:

- Deep understanding of product, users, and domain nuances
- Strong alignment with engineering and product teams
- Highest control over security, data, and compliance obligations
- Better long‑term maintainability of automation suites
- Clear ownership for quality metrics and standards

Cons:

- High fixed costs for salaries, benefits, and tooling
- Slow to ramp up capacity during busy periods
- Hard to cover every tech stack and device type in‑house
- Risk of stagnation in tooling and practices if leaders aren’t curious
- Potential burnout in small teams supporting many products

- Best for: regulated industries (fintech, health, gov), where data residency and auditability matter more than pure speed.

- Best for: complex, long‑lived platforms with intricate business rules and lots of hidden edge cases.

- Best for: organizations that already have mature engineering practices and can attract good QA talent.

Pro tip: If you go in‑house, treat your QA automation framework like a product: maintain a roadmap, backlog, and metrics (fail rates, runtime, flaky tests) so it doesn’t rot quietly in the corner.
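To make those framework metrics concrete, here is a minimal Python sketch that computes a suite's fail rate, mean test duration, and a list of flaky tests from CI history. It assumes you can export one record per test execution with a test ID, commit SHA, pass/fail outcome, and duration; the `RunRecord` shape and `suite_health` helper are hypothetical. It uses one common working definition of flakiness: a test that both passed and failed on the same commit.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """One execution of one test in CI (hypothetical export format)."""
    test_id: str
    commit: str
    passed: bool
    duration_s: float


def suite_health(runs: list[RunRecord]) -> dict[str, object]:
    """Summarize fail rate, mean duration, and flaky tests from raw CI runs."""
    if not runs:
        raise ValueError("no runs to analyze")
    # Collect every outcome seen for each (test, commit) pair.
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for run in runs:
        outcomes[(run.test_id, run.commit)].add(run.passed)
    # Same code, different results: treat as flaky rather than a real regression.
    flaky = sorted({test for (test, _commit), seen in outcomes.items() if len(seen) == 2})
    failures = sum(1 for run in runs if not run.passed)
    return {
        "fail_rate": failures / len(runs),
        "mean_duration_s": sum(run.duration_s for run in runs) / len(runs),
        "flaky_tests": flaky,
    }


runs = [
    RunRecord("login_flow", "a1f3", True, 12.0),
    RunRecord("login_flow", "a1f3", False, 30.0),  # retried on the same commit, flipped
    RunRecord("checkout", "a1f3", True, 8.0),
    RunRecord("checkout", "b7c9", False, 9.0),     # fails on a new commit: investigate, not flaky
]
print(suite_health(runs))
```

With these sample runs, `login_flow` is flagged as flaky while `checkout` is not, because its failure appeared on a different commit. Recording the numbers every sprint, rather than once, is what shows whether the framework is rotting or improving.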

## 3. Model 2: Outsourced QA and software testing automation service providers

Outsourced QA and software testing automation services mean you bring in a specialized provider, often with offshore or nearshore teams, to design, build, and run your test automation. Think of companies that live and breathe tools like Playwright, Cypress, Appium, and TestComplete, plus CI orchestration with Jenkins, GitHub Actions, or GitLab CI.

The appeal is obvious: variable cost and speed. You don’t wait four months to hire; you sign an MSA and you’re running discovery workshops two weeks later. For early‑stage startups or lean product teams, this is often the difference between having automation and having none at all.

Pricing tends to be flexible: fixed‑price projects for initial framework setup, time‑and‑materials (T&M) billing for ongoing regression coverage, or dedicated pods of automation engineers. In offshore setups, effective hourly rates can be 40–60% lower than the cost of onshore hires, though you need decent management to avoid quality dips.
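As a rough worked example of that trade‑off, the Python sketch below turns the 40–60% range into an offshore rate band and then subtracts the internal time spent managing the provider. The function names, the 100‑per‑hour onshore rate, the hour counts, and the 120‑per‑hour internal rate are all hypothetical inputs chosen for illustration; plug in your own quotes.

```python
def offshore_rate_band(onshore_rate: float, min_discount: float = 0.40,
                       max_discount: float = 0.60) -> tuple[float, float]:
    """Return (lowest, highest) offshore hourly rate for a 40-60% discount range."""
    return onshore_rate * (1 - max_discount), onshore_rate * (1 - min_discount)


def net_monthly_savings(onshore_rate: float, offshore_rate: float, provider_hours: float,
                        internal_rate: float, oversight_hours: float) -> float:
    """Gross rate savings minus the cost of internal staff managing the provider."""
    gross = (onshore_rate - offshore_rate) * provider_hours
    oversight = internal_rate * oversight_hours
    return gross - oversight


low, high = offshore_rate_band(100.0)
print(f"offshore band: {low:.2f}-{high:.2f} per hour")
# Hypothetical month: two provider engineers at 160 h each, 40 h of internal oversight.
for rate in (low, high):
    print(rate, net_monthly_savings(100.0, rate, 320, 120.0, 40))
```

Even in this toy example, oversight eats a visible share of the gross saving (4,800 of 12,800 at the upper rate); if the provider needs heavy hand‑holding, the gap closes quickly.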

And yes, quality does vary. I’ve seen excellent outsourced teams who essentially become an extension of engineering, and I’ve seen others who spam UI tests that break every sprint. The annoying thing about low‑quality providers is they create more work than they remove—flaky tests, poor assertions, zero documentation.

Where outsourced QA shines is breadth. Providers that work across many clients see patterns. They know what breaks on specific mobile OS versions, what anti‑automation traps certain SaaS vendors use, and how to integrate testing into different styles of CI/CD pipelines. They’ll often bring pre‑built libraries and utilities to speed things along.

There’s also a knowledge transfer tax. You’ll need to explain your domain, users, environments, data constraints, and regulatory boundaries. The first 4–6 weeks can feel slower than just "doing it yourself." But if you’re honest about that ramp‑up period, the payoff often arrives in months two and three, when regression cycles stop blocking releases.

Security and compliance are the big, justified worries. You’ll be granting access to staging, sometimes production logs, and maybe non‑anonymized data (ideally not, but it happens). You’ll want a provider comfortable with NDAs, SOC 2 or ISO 27001 norms, and sane access control. Even Wikipedia’s overview of software testing highlights how test environments and data need careful handling to avoid privacy issues.

One more point: outsourced doesn’t mean you abdicate strategy. The companies that get burned are the ones who toss QA over the wall and hope. You still need a product or engineering leader owning the testing strategy, defining risk levels, and deciding what success looks like.

Pros:

- Faster ramp‑up and flexible scaling compared to pure in‑house hiring
- Lower effective cost, especially with offshore or nearshore teams
- Access to broad tooling experience and battle‑tested patterns
- Ability to spin up specialized skills (performance, security, mobile) on demand
- Good option when engineering leadership is already stretched thin

Cons:

- Requires ongoing coordination and clear requirements to avoid misalignment
- Initial knowledge transfer slows down early delivery
- Risk of vendor lock‑in if frameworks and tooling are opaque
- Variable quality across providers; due diligence really matters
- Security, data access, and compliance require careful governance

- Best for: startups and SMBs that need QA automation before they can afford a full in‑house team.

- Best for: companies with aggressive release cadences that can’t slow down for a long hiring cycle.

- Best for: organizations experimenting with new tech stacks where external experts already have playbooks.

Pro tip: Ask any outsourced provider for flakiness metrics, typical test execution times, and example reports from other projects—if they can’t share anonymized samples, that’s a red flag.